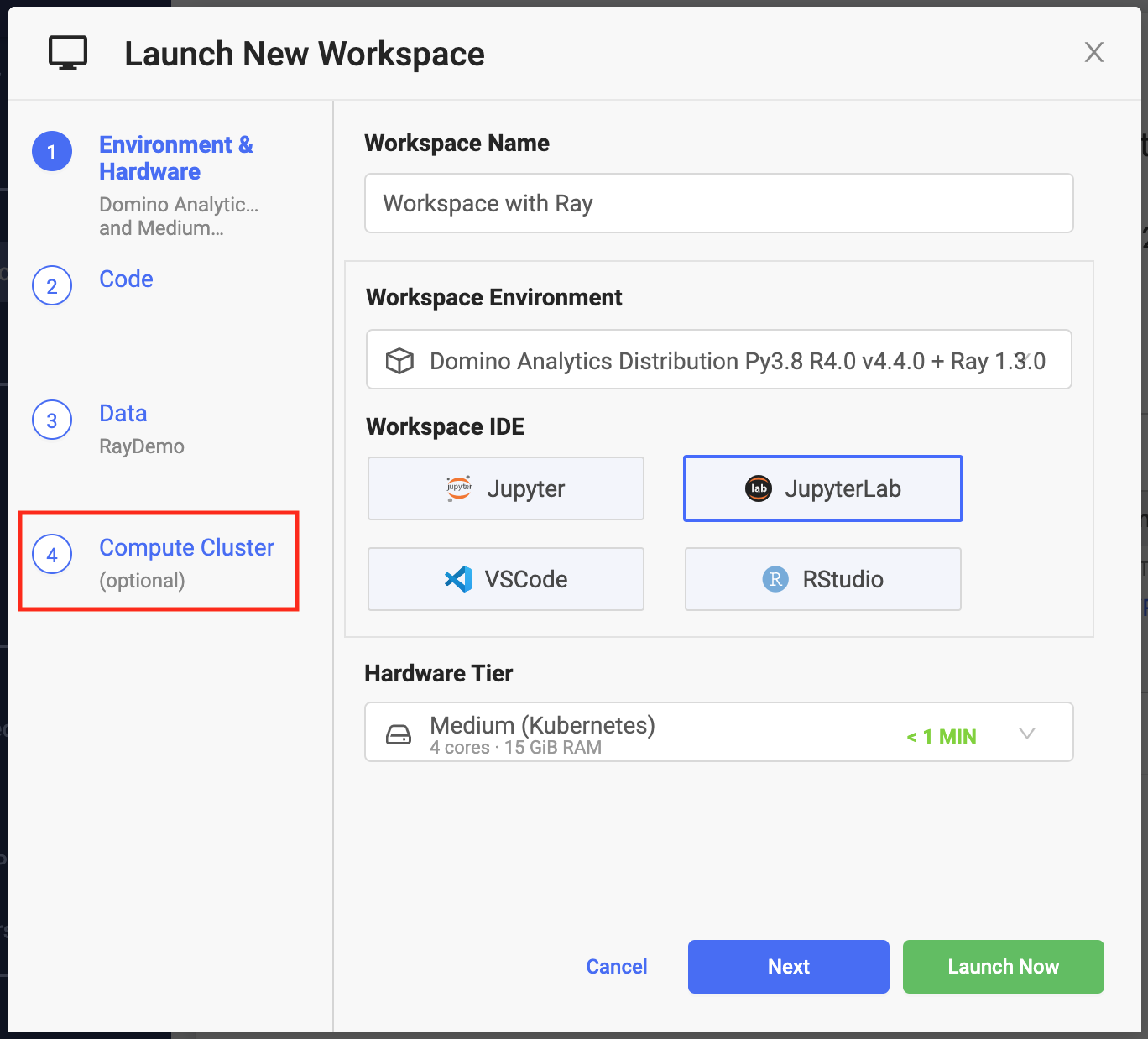

Create an on-demand Ray cluster attached to a Domino Workspace:

- Go to Workspaces > New Workspace.

- From Launch New Workspace, select the Compute Cluster step.

- Specify the cluster settings and launch your workspace. After the workspace is up, it will have access to the Ray cluster you configured.

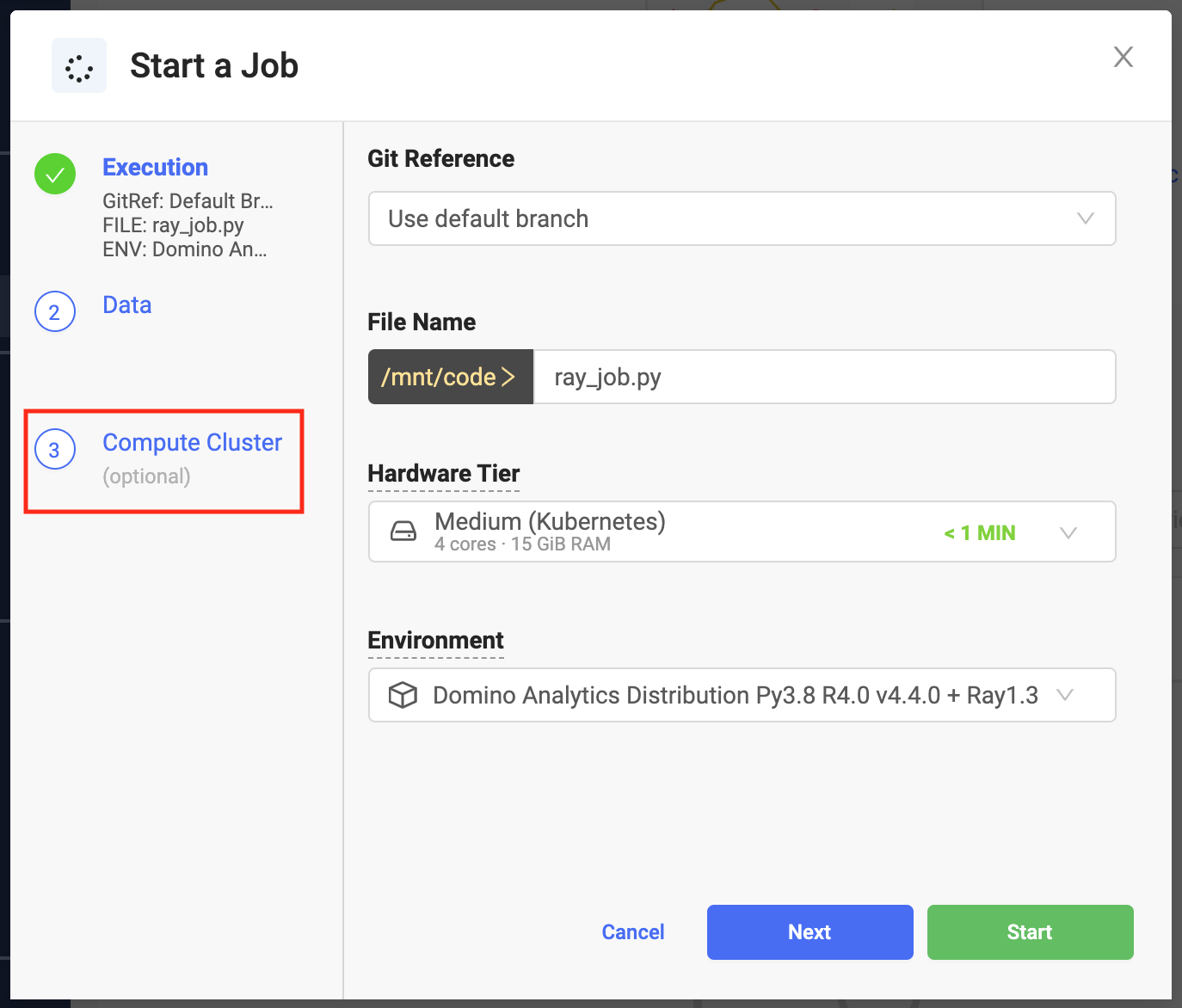

Create an on-demand Ray cluster attached to a Domino Job:

- Go to Jobs > Run.

- From Start a Job, select the Compute Cluster step.

- Specify the cluster settings and launch your job. The job will have access to the Ray cluster you configured.

You can use any Python script that interacts with your Ray cluster.
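As an illustration, a job script might look like the following minimal sketch. It assumes the `RAY_HEAD_SERVICE_HOST` and `RAY_HEAD_SERVICE_PORT` environment variables that Domino sets for the cluster and connects with `ray.util.connect`, as in the connection snippet later on this page. The names `square` and `main` are hypothetical and chosen only for this example.

```python
import os


def square(x):
    """Plain Python function; main() wraps it as a Ray task."""
    return x * x


def main():
    # Imported here so the module can be loaded even where Ray is not installed.
    import ray
    import ray.util

    # Connect only if this process is not already attached to a cluster.
    if not ray.is_initialized():
        host = os.environ["RAY_HEAD_SERVICE_HOST"]
        port = os.environ["RAY_HEAD_SERVICE_PORT"]
        ray.util.connect(f"{host}:{port}")

    # Turn the plain function into a remote task and fan out ten calls.
    remote_square = ray.remote(square)
    futures = [remote_square.remote(i) for i in range(10)]

    # Block until all tasks finish and print their results.
    print(ray.get(futures))
```

To use this as a job script, call `main()` at the end of the file. The work is then distributed across the Ray workers attached to the job.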

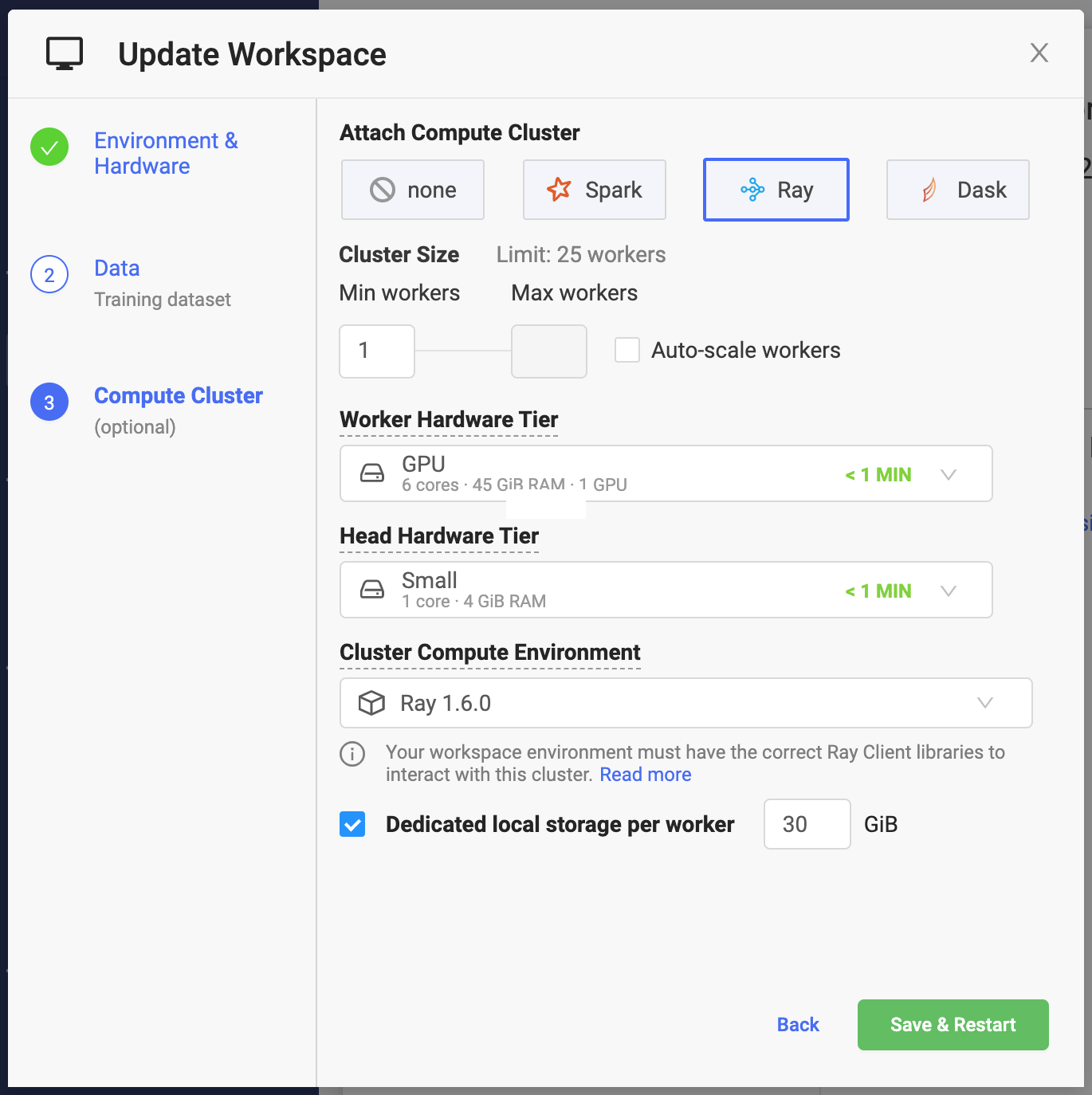

Set the following cluster settings:

- Number of Workers

  The number of Ray node workers in the Ray cluster when it starts. The combined capacity of the workers will be available for your workloads.

- Max Workers

  The maximum number of Ray node workers that the cluster can reach when Auto-scale workers is enabled. See cluster autoscaling.

- Quota Max

  The maximum number of workers that you can make available to your cluster. It is limited by the number of per-user executions that your Domino administrator has configured for your deployment, or by the maximum simultaneous executions of the underlying Hardware Tier used for workers.

  In addition to the Ray node workers, you need one slot for your cluster head node and one slot for your workspace or job.

- Worker Hardware Tier

  The amount of compute resources (CPU, GPU, and memory) that will be made available to each Ray node worker.

- Head Hardware Tier

  Works the same way as the worker hardware tier, but applies to the resources available to your Ray cluster head node.

  The Ray head node coordinates the Ray workers, so it does not need a significant amount of CPU resources. It hosts the Ray Global Control Store. The amount of memory required depends on the complexity of your application.

- Cluster Compute Environment

  Designates your compute environment for the Ray cluster.

- Dedicated local storage per executor

  The amount of dedicated storage, in gibibytes (GiB, 2^30 bytes), that will be available to each Ray worker.

  The storage is automatically mounted to /tmp. It is provisioned when the cluster is created and de-provisioned when the cluster is shut down.

  Warning: Do not use the per-worker local storage for any data that must remain available after the cluster is shut down.
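The slot accounting described under Quota Max can be sketched as a small calculation. This is an illustration only: the function name `max_ray_workers` and its parameters are hypothetical, and it assumes that the head node and the workspace or job each consume one per-user execution slot while only workers count against the worker Hardware Tier limit. Your deployment may account for these differently.

```python
def max_ray_workers(per_user_limit, worker_tier_limit):
    """Upper bound on Ray workers under the two limits described above.

    Assumption: the head node and the workspace or job each use one of the
    per-user execution slots, and only workers count against the worker
    hardware tier's simultaneous-execution limit.
    """
    reserved = 2  # one slot for the head node, one for the workspace or job
    by_user_quota = per_user_limit - reserved
    # The tighter of the two limits wins; never report a negative count.
    return max(0, min(by_user_quota, worker_tier_limit))


print(max_ray_workers(per_user_limit=25, worker_tier_limit=10))  # 10
print(max_ray_workers(per_user_limit=8, worker_tier_limit=10))   # 6
```

In the second call the per-user limit is the binding constraint: of 8 slots, 2 are reserved, which leaves 6 for workers.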

When provisioning your on-demand Ray cluster, Domino sets up environment variables that hold the information needed to connect to your cluster.
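Before connecting, it can help to confirm that these variables are present, for example when the same script is also run outside a cluster-enabled execution. The sketch below assumes the variable names `RAY_HEAD_SERVICE_HOST` and `RAY_HEAD_SERVICE_PORT` used elsewhere on this page. The helper `ray_head_address` is a hypothetical name, not part of Domino or Ray.

```python
import os

REQUIRED_VARS = ("RAY_HEAD_SERVICE_HOST", "RAY_HEAD_SERVICE_PORT")


def ray_head_address(env=None):
    """Return "host:port" for the Ray head node, or raise a clear error.

    `env` defaults to os.environ; passing a plain dict makes the helper
    easy to exercise without a running cluster.
    """
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError(
            "Ray cluster variables not set: " + ", ".join(missing)
        )
    return f"{env['RAY_HEAD_SERVICE_HOST']}:{env['RAY_HEAD_SERVICE_PORT']}"


# Example with explicit values instead of the real environment.
print(ray_head_address({
    "RAY_HEAD_SERVICE_HOST": "10.0.0.5",
    "RAY_HEAD_SERVICE_PORT": "10001",
}))  # 10.0.0.5:10001
```

The returned string has the same `host:port` shape that the connection call expects.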

Use the following snippet to connect:

Note: You will not use

```python
import os

import ray
import ray.util

...

# Connect only if this process is not already attached to a Ray cluster.
if not ray.is_initialized():
    # Domino sets these variables when it provisions the on-demand cluster.
    service_host = os.environ["RAY_HEAD_SERVICE_HOST"]
    service_port = os.environ["RAY_HEAD_SERVICE_PORT"]
    ray.util.connect(f"{service_host}:{service_port}")
```

Ray provides a built-in dashboard with metrics, charts, and other features that help users understand the Ray cluster, libraries, and workloads.

Use the dashboard to:

- View cluster metrics.

- View logs, errors, and exceptions across many machines in a single pane.

- View resource utilization, tasks, and logs per node and per actor.

- Kill actors and profile Ray jobs.

- See Tune jobs and trial information.

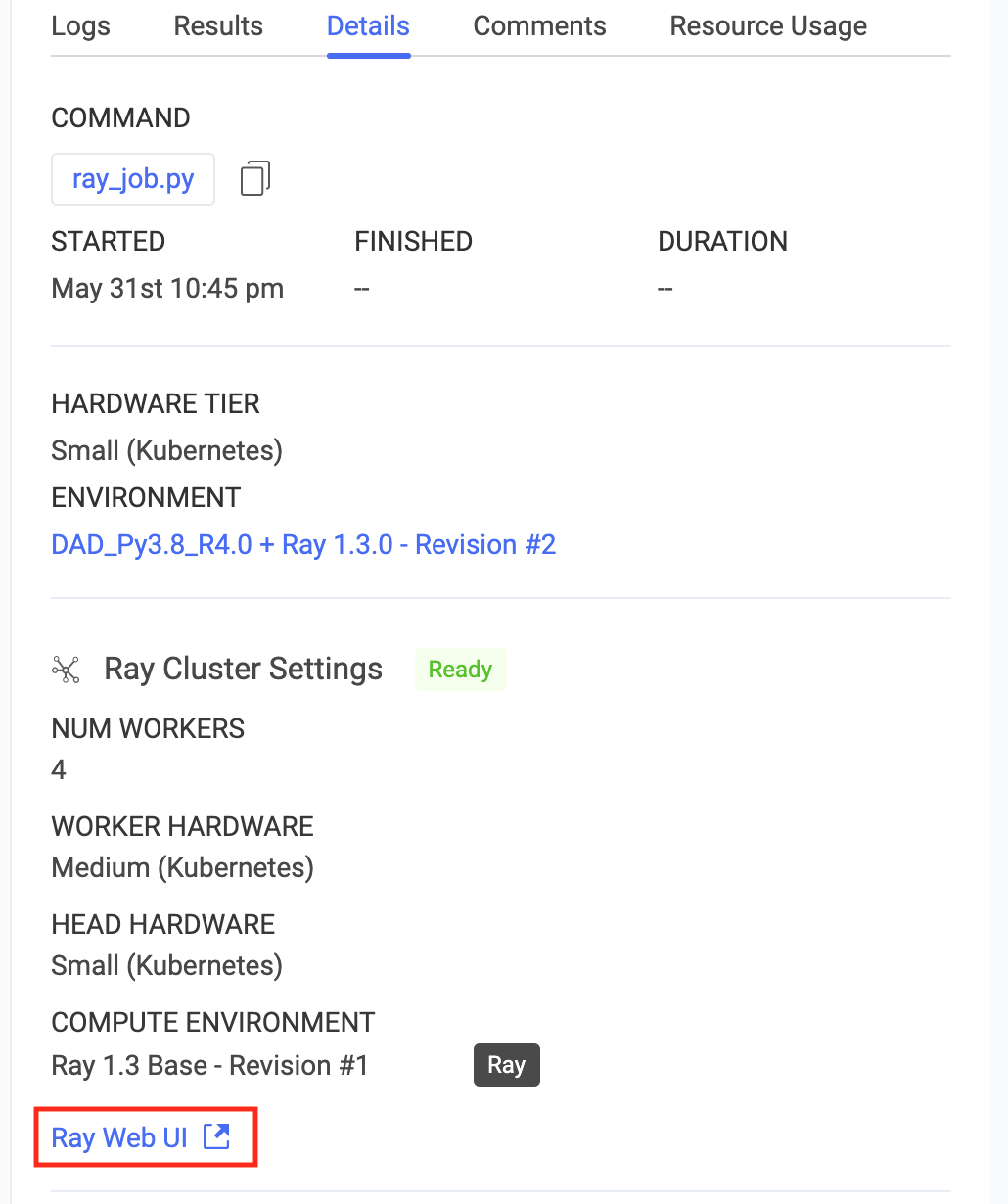

Domino makes the Ray web UI available for active on-demand clusters attached to both workspaces and jobs.

When a workspace or job starts, Domino automatically provisions an on-demand Ray cluster with the cluster settings you specified and attaches it to the workspace or job as soon as the cluster becomes available.

When the workspace or job terminates, the on-demand Ray cluster and all associated resources are automatically terminated and deprovisioned. This includes any compute resources and storage allocated for the cluster.

The on-demand Ray clusters created by Domino are not meant to be shared between users. Each cluster is associated with a single workspace or job instance. Access to the cluster and the Ray web UI is restricted to users who can access the workspace or job attached to it. This restriction is enforced at the networking level, and the cluster can only be reached from the execution that provisioned it.