Use Domino’s integrated model review process to ensure that production models meet organizational policies and regulations, and avoid unintended business impacts.

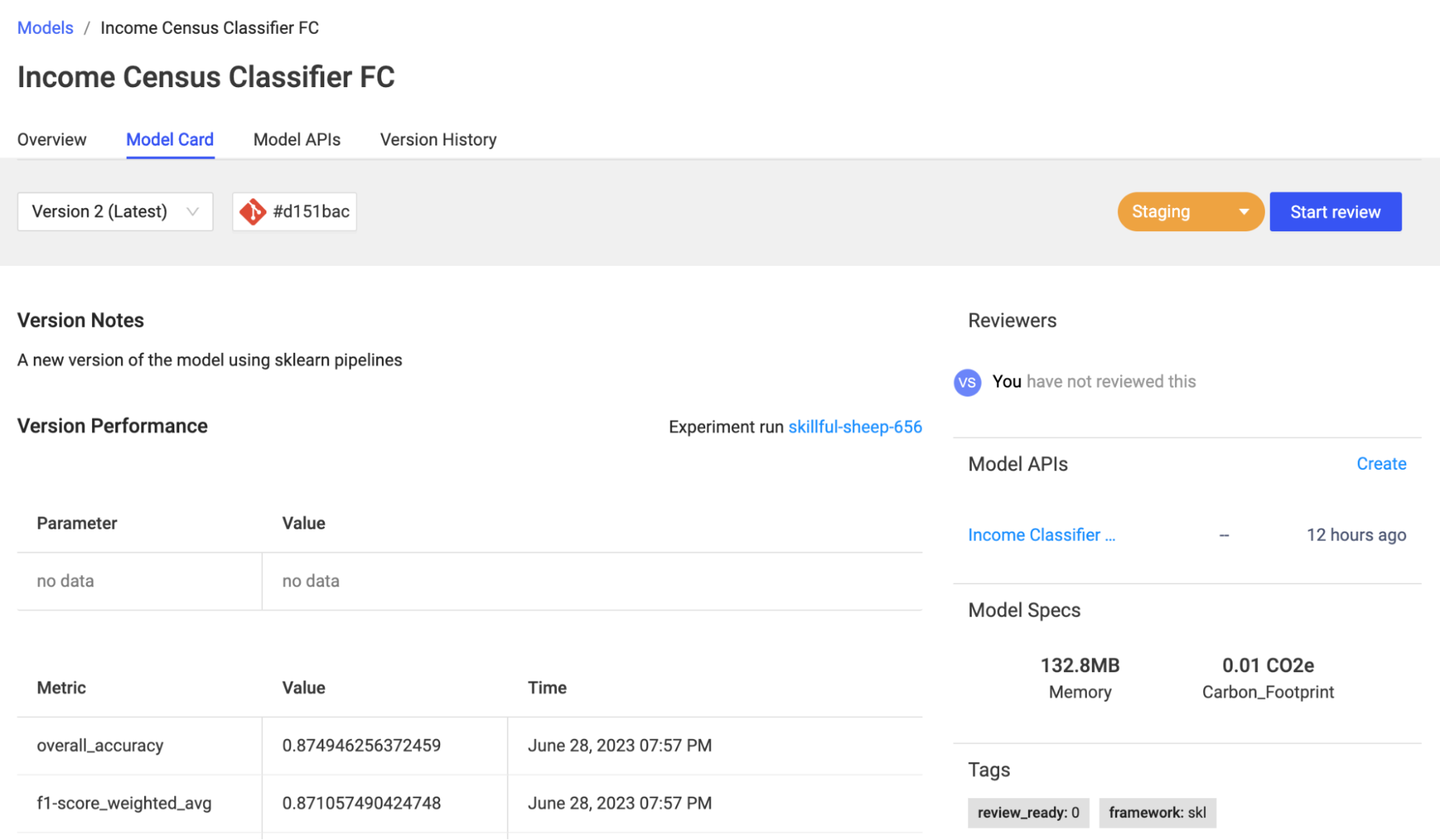

Domino uses model cards to track model lineage, creating a system of record that helps you evaluate a model’s accuracy, fairness, compliance, and auditability. Project owners can collaborate with multiple stakeholders on these cards, and fine-grained access control keeps them secure.

Learn how Domino supports the different aspects of model governance.

Accountability: Domino assigns clear roles and responsibilities through an access control policy based on the attributes and actions associated with model creation and management.

Transparency & reproducibility: Domino records the exact code, environment, workspace settings, datasets, and data sources for each experiment and associates them with the published model. This data is easily accessible in every model card to help you understand exactly what went into a model and how to reproduce it.

Accuracy & monitoring: Domino offers prebuilt and customizable environments in which users can embed explainability solutions that report model fairness and bias for every training job. Customizable model cards can present these reports, giving all collaborators visibility into critical metrics like feature importance, cohort impact, and bias evaluations. Additionally, registered models deployed to a Model API can use automatic model drift detection to help keep them accurate over time.

-

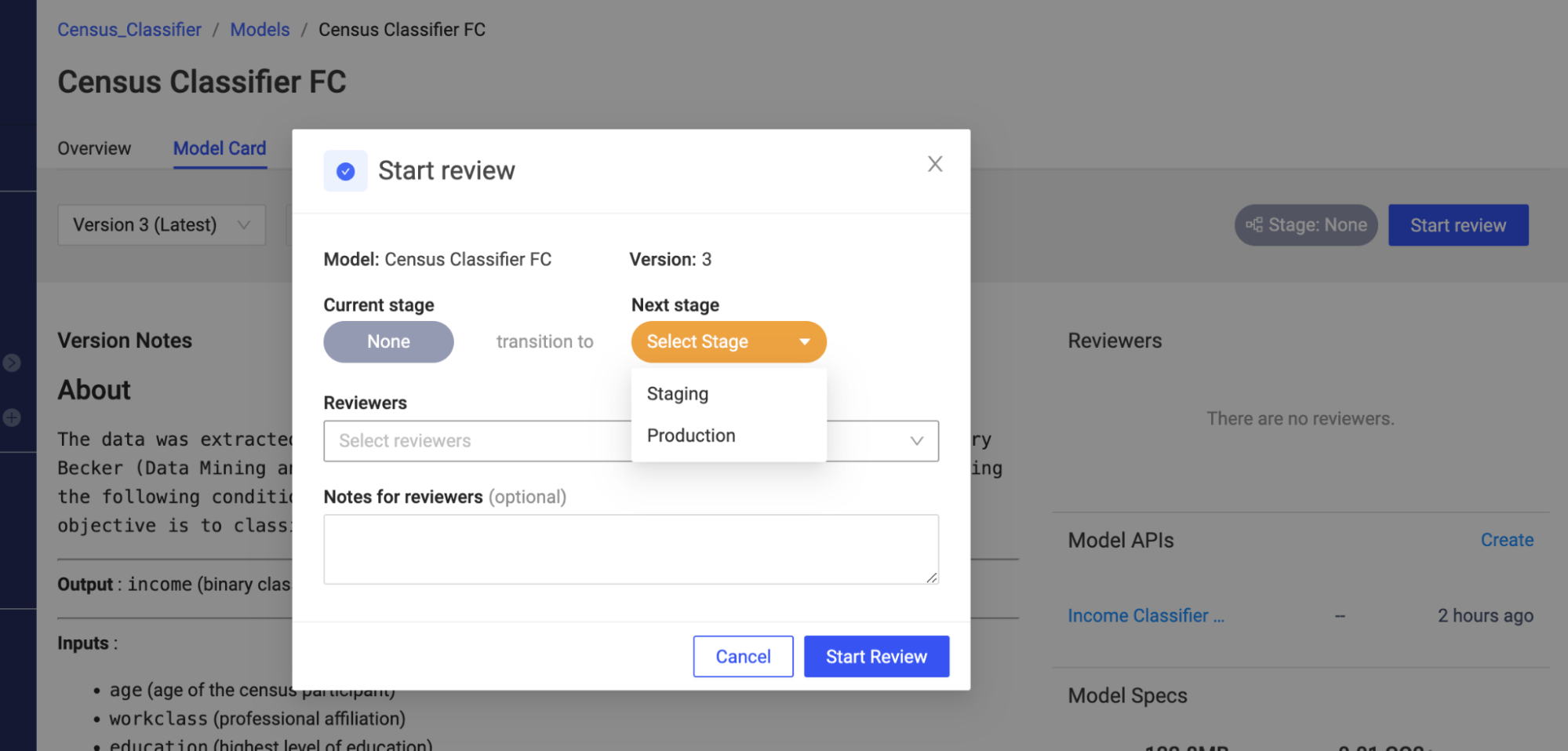

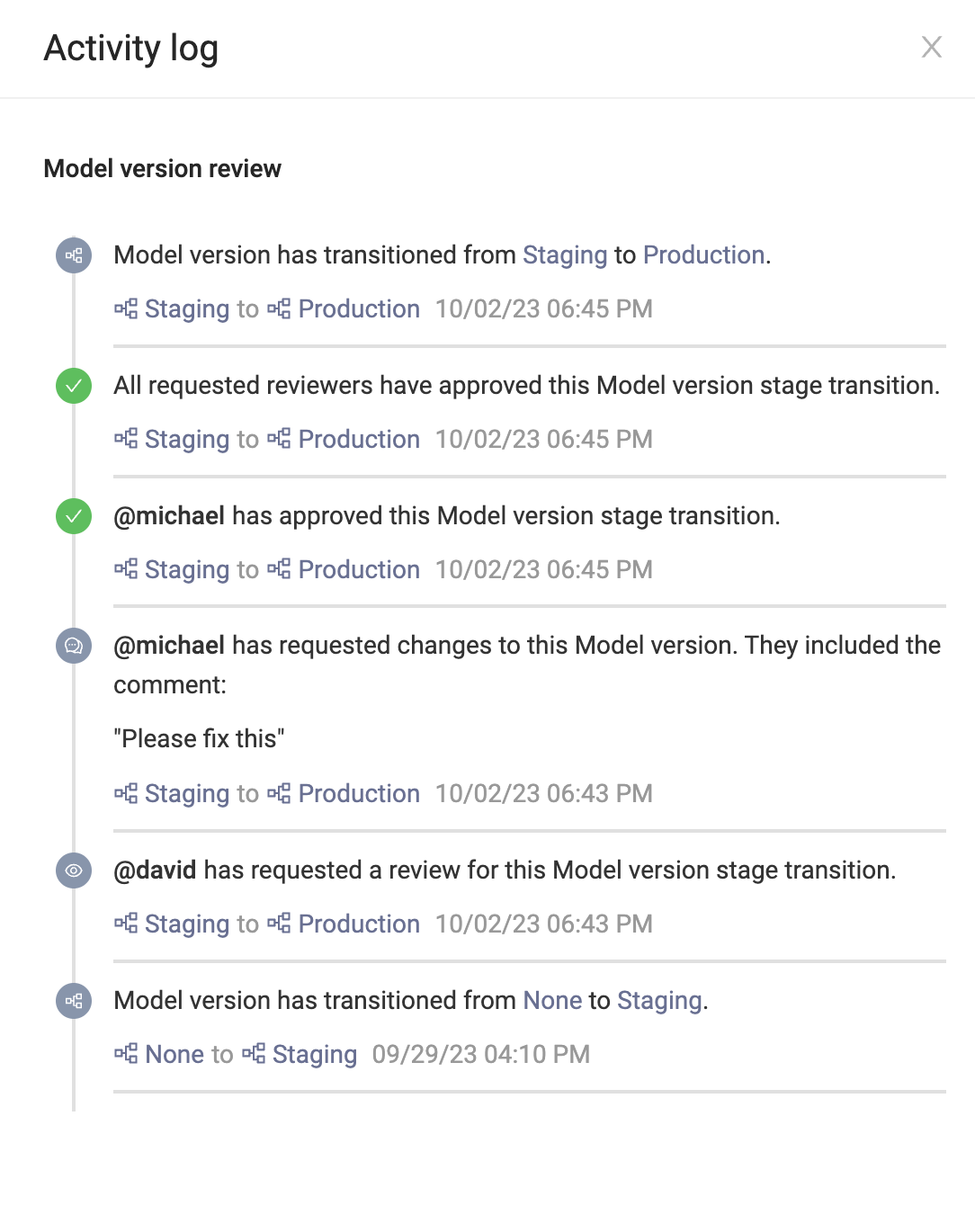

Initiate the review: Project owners and collaborators can request a review from reviewers (fellow project members). The requester creates a note for the reviewers and sets the desired stage for the model, for example, "Staging" or "Production".

- Review notification: Once a review is initiated, reviewers receive a Domino notification about the model review request. The notification includes a link to the model card details for the specific model version.

- Review the model: Reviewers examine the sections of the model card relevant to their role and responsibility. Reviewers can test the model via the embedded Model APIs, explore the experiment details, or open a workspace to dive deeper into the training context. The review workflow continues until all reviewers are satisfied and approve the model. Once all reviews are marked as approved, the model automatically transitions to the target stage.
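The approval rule described above can be sketched in a few lines of Python. This is only an illustrative in-memory model, not a Domino API: the `ReviewRequest` class, its fields, and the reviewer names are all hypothetical, and it assumes a request starts with every reviewer unapproved.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ReviewRequest:
    """Hypothetical model of one review request (not a Domino API)."""
    model_version: int
    current_stage: str
    target_stage: str
    note: str
    # Maps each reviewer to True once that reviewer has approved.
    approvals: Dict[str, bool] = field(default_factory=dict)

    def approve(self, reviewer: str) -> None:
        if reviewer not in self.approvals:
            raise ValueError(f"{reviewer} is not a reviewer on this request")
        self.approvals[reviewer] = True
        # The version moves to the target stage only when everyone has approved.
        if all(self.approvals.values()):
            self.current_stage = self.target_stage


request = ReviewRequest(
    model_version=3,
    current_stage="Staging",
    target_stage="Production",
    note="Ready for sign-off",
    approvals={"alice": False, "bob": False},
)
request.approve("alice")
stage_after_first_approval = request.current_stage   # still "Staging"
request.approve("bob")
stage_after_all_approvals = request.current_stage    # now "Production"
```

A single outstanding approval keeps the version in its current stage, which mirrors the rule that the transition happens only after all reviews are approved.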

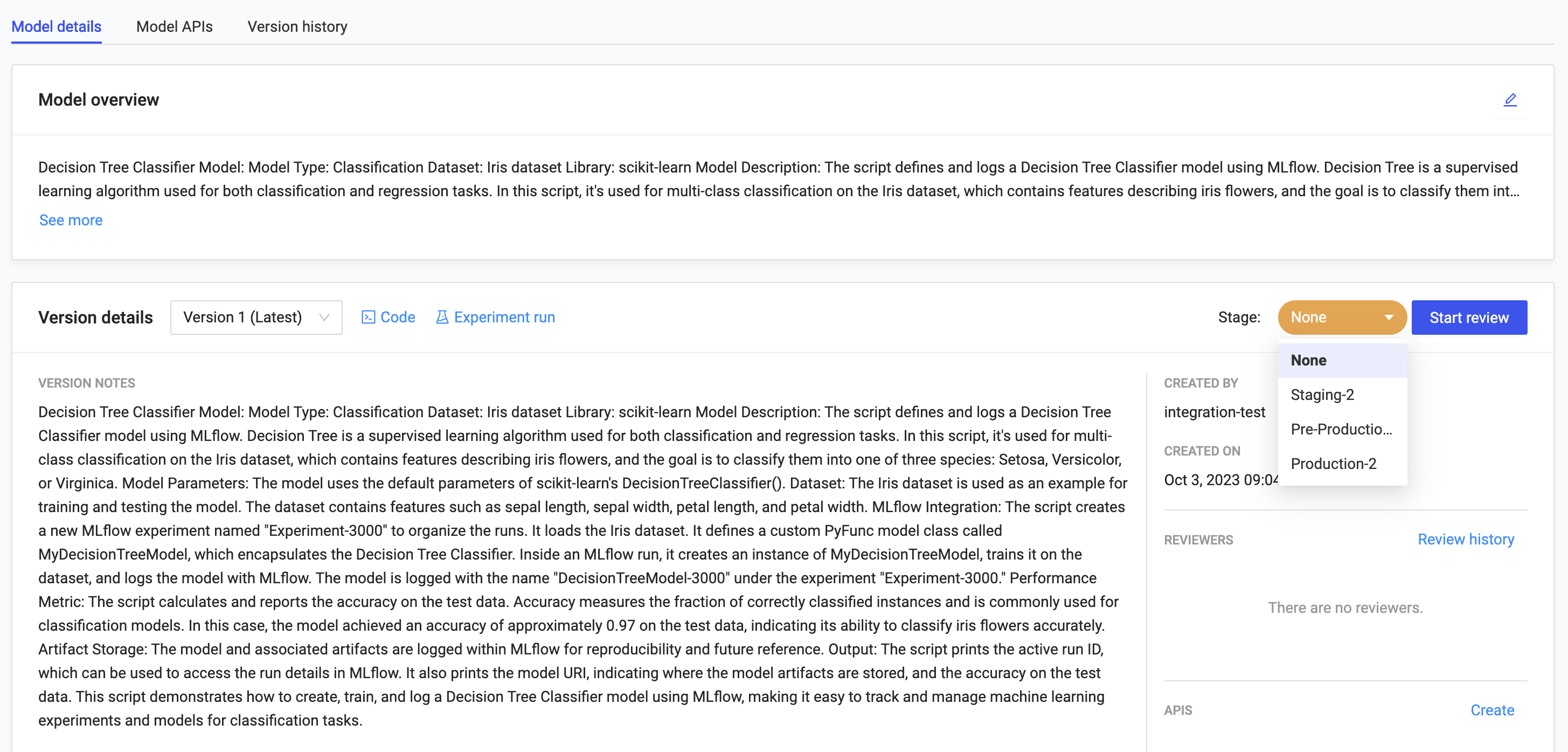

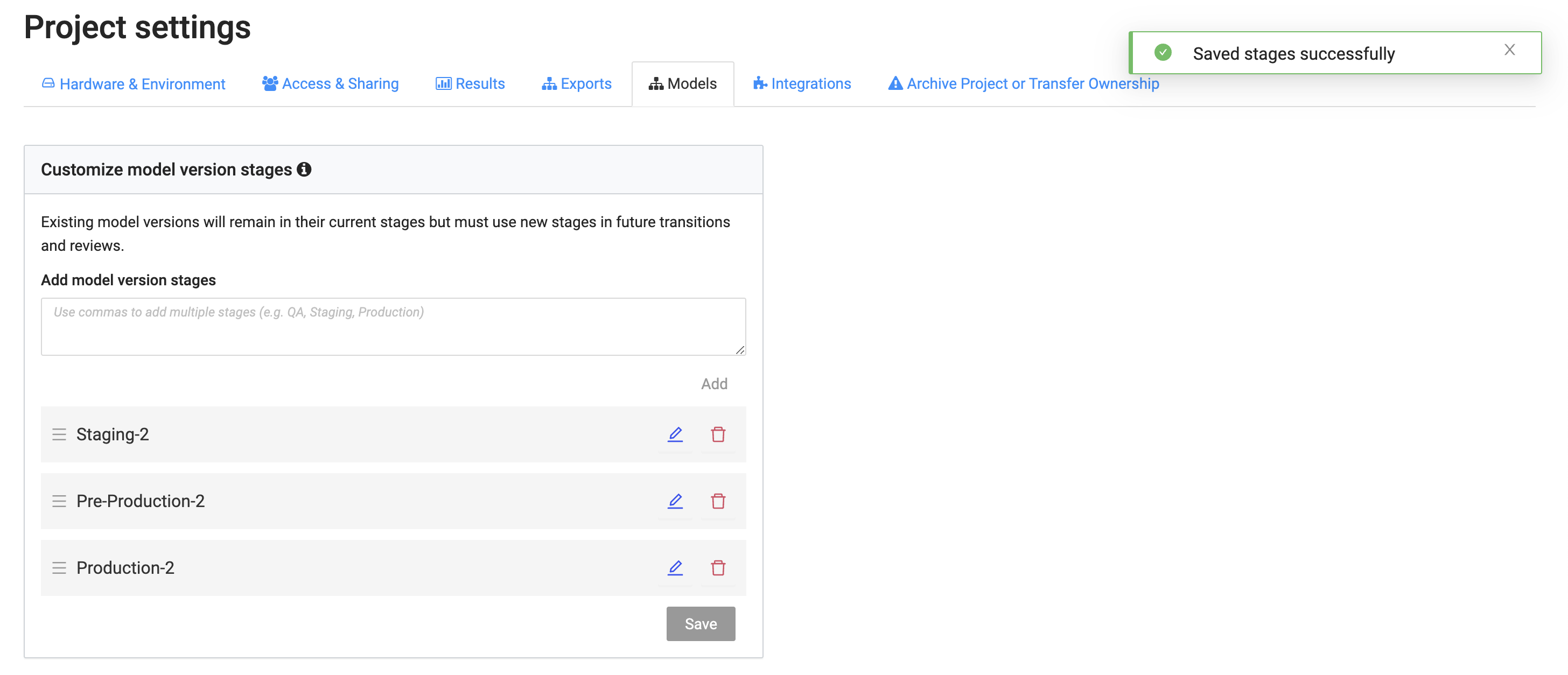

Domino supports custom stages for model versions, so you can extend MLflow’s default stages of Staging and Production and tailor the review process to your needs.

For example, you could add model version stages like Pre-Production or Staging-2 to add more granularity to your model reviews.

You must be a Domino admin or a project owner to define custom stages.


Global stage settings

Domino admins can modify global custom stages for all Projects in the organization.

To set global stages:

- Go to the Admin Panel > Advanced > Central Config.

- Add a record with the key com.cerebro.domino.registeredmodels.stages and a value that lists the desired global custom stages, separated by commas.

- Restart services to apply the changes.
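As a rough illustration of the record's value, the sketch below assembles a comma-separated stage list in Python. Only the key name comes from this page. The helper `build_stages_value` is hypothetical, and its checks (non-empty, unique names without commas, no surrounding whitespace) are assumptions about what makes a safe value, not documented Domino requirements.

```python
CONFIG_KEY = "com.cerebro.domino.registeredmodels.stages"


def build_stages_value(stages):
    """Join stage names into one comma-separated string (hypothetical helper)."""
    cleaned = [s.strip() for s in stages]
    if not cleaned or any(not s for s in cleaned):
        raise ValueError("stage names must be non-empty")
    if any("," in s for s in cleaned):
        raise ValueError("stage names must not contain commas")
    if len(set(cleaned)) != len(cleaned):
        raise ValueError("stage names must be unique")
    return ",".join(cleaned)


value = build_stages_value(["Staging", "Pre-Production", "Production"])
print(f"{CONFIG_KEY} = {value}")
# com.cerebro.domino.registeredmodels.stages = Staging,Pre-Production,Production
```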

Project stage settings

A Project owner can override the global custom stages for a specific Project. The Project-level custom stages apply to all model versions in the Project.

For example, a deployment starts with the MLflow defaults ("Staging" and "Production"). An admin can override these system defaults with global custom stages ("Staging", "Pre-Production", and "Production"). A project owner can then override the global custom stages with project-level custom stages ("Staging-2", "Pre-Production-2", and "Production-2").
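The override order in this example can be expressed as a small resolver. This is a sketch of the precedence only, assuming that an unset or empty list at one level falls through to the next. The function `effective_stages` and the constant name are hypothetical, not part of Domino or MLflow.

```python
from typing import List, Optional

# Defaults as described on this page.
MLFLOW_DEFAULT_STAGES = ["Staging", "Production"]


def effective_stages(
    global_stages: Optional[List[str]] = None,
    project_stages: Optional[List[str]] = None,
) -> List[str]:
    """Return the stages that apply to a project: project, then global, then defaults."""
    if project_stages:
        return list(project_stages)
    if global_stages:
        return list(global_stages)
    return list(MLFLOW_DEFAULT_STAGES)


global_custom = ["Staging", "Pre-Production", "Production"]
project_custom = ["Staging-2", "Pre-Production-2", "Production-2"]

no_overrides = effective_stages()
admin_only = effective_stages(global_stages=global_custom)
with_project = effective_stages(global_custom, project_custom)
```

Here `no_overrides` holds the defaults, `admin_only` holds the global custom stages, and `with_project` holds the project-level stages.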

To set Project stages:

- Go to Project > Project Settings > Models.

- Edit the Custom model version stages.

See a log of previous stage transitions, review requests, and review responses in the activity log. Find links to a model version’s activity log in the Reviewers section of its model card or in the Activity column of the version history table.

- Export a model as a container to serve as a prediction endpoint in your own production environment.

- Export via one of our integrated solutions for SageMaker, NVIDIA Fleet Command, or Snowflake.